Hundreds of satellites circle the Earth, sending back petabytes of imagery and readings every day. Managing and extracting value from this data poses a range of technical challenges. Working with our friends at Sinergise and the European Space Agency’s phi-lab, we are building a toolset for applying artificial intelligence (AI) to satellite imagery at scale and in near real-time. This post demonstrates a method for rapid classification of land use and land cover (LULC) on Sentinel data. We accomplish this using Sentinel Hub, eo-learn, fastai, and our chip-n-scale prediction tools. To support this workflow, we built a new utility, fastai-serving, that lets you scale fastai model predictions on the cloud.

Rapid AI at Scale

Satellite imagery provides tremendous insights into our rapidly changing planet. Images and data cover the planet daily, and some programs have been collecting data for decades, providing value for understanding and tracking climate change, urban growth, power and transport availability, agricultural production, and much more. The volume, velocity, complexity, and sheer size of this data are more than humans can handle. AI is a critical tool for getting value from this data and making it actionable. AI helps us track rapidly evolving natural disasters, quickly map power infrastructure, and cheaply analyze decades of imagery to determine the impact of special economic zones.

Along with Sinergise, we are supporting the European Space Agency’s great work to unlock the power of ESA’s data to inform decision makers across all sectors. The Query Planet project imagines a day when non-technical users can rapidly query satellite data for insights. A critical part of that vision is powerful automation and AI. Last year, Sinergise released eo-learn, an open source Python library for accessing Earth observation data and preparing it for AI and other data science uses. Development Seed is contributing to a set of AI tools that integrate seamlessly into ESA’s data infrastructure and platforms. These tools make it easier for ESA’s data users to build fast AI pipelines that are automated, inexpensive to operate, and able to run at planetary scale.

Land use classification

Tracking changes in land use is essential for understanding urban changes, deforestation, and animal habitats. ESA’s Sentinel-2 satellites, in particular, are useful for this task because of their relatively high revisit rate, resolution, and multiple agriculture-focused wavelengths.

My colleague Zhuangfang NaNa Yi designed an approach to land classification that achieves good accuracy from a single date of observation and is quick to run and scale. Her full walkthrough describes how to train a land use segmentation algorithm on that single-date input. This approach is often quicker and cheaper than alternatives that use data from multiple points in time. Multitemporal approaches can better account for noise and clouds, and they tend to do much better at distinguishing between certain agricultural and vegetation classes. Sinergise has published a great example of multitemporal land use classification using eo-learn.

However, single-observation methods are valuable for certain applications. Sometimes it is worth trading a small amount of accuracy for a much faster and less expensive result. This might be the case when scaling over a very large area, when doing initial exploration and hypothesis testing, or when responding to certain rapid-onset disasters. Using less data and limited hyperparameter tuning also allows faster iterations while developing a model. Predictions on a single date are also valuable for tracking land use change within a season. Urchn uses regular land use assessments as one input to flag areas where the map may be out of date.

Rapid Earth AI workflow

NaNa’s workflow optimizes for speed and efficiency. We use fastai, a deep learning library that packages many modern machine learning practices to make model development easier. We use a pre-trained [dynamic Unet available in fastai](https://docs.fast.ai/vision.models.unet.html) to get the model running quickly.

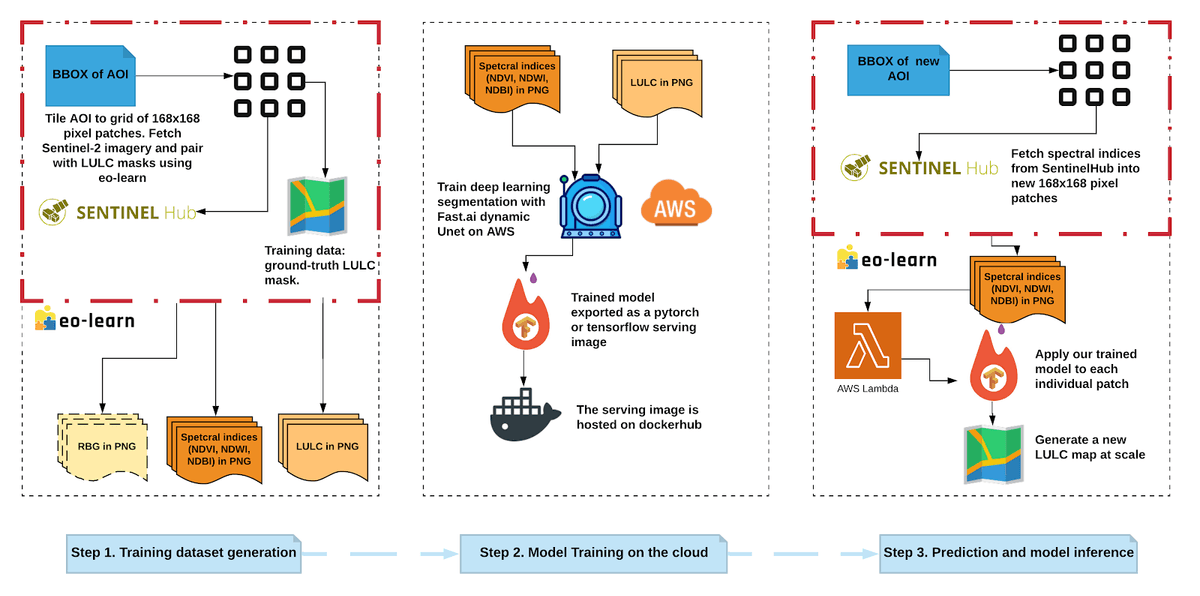

Figure 1. The deep learning pipeline that fetches and creates training data for LULC modeling on the cloud. It can be scaled up with our current open-source, cloud-based pipeline, chip-n-scale.

The pre-trained Unet is designed for three-channel (RGB) imagery. To accommodate this, we need a way to reduce our input from Sentinel-2’s original thirteen bands to three without losing too much spectral information. We use eo-learn to compute three spectral indices (NDVI, NDWI, and NDBI) that carry a useful signal for land use, and we substitute these for the RGB channels. eo-learn also served as the primary framework for data acquisition and transformation before the data was passed to fastai.
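
For readers who want to try this band reduction outside eo-learn, here is a minimal NumPy sketch. It assumes a (13, H, W) Sentinel-2 reflectance array in the band order listed in `BANDS`, and it uses the common textbook definitions NDVI = (B08 - B04) / (B08 + B04), NDWI = (B03 - B08) / (B03 + B08) (the green/NIR form), and NDBI = (B11 - B08) / (B11 + B08). The band order, the exact index formulas, the rescaling to 8-bit, and the helper names are assumptions for illustration; they are not taken from NaNa’s walkthrough or from eo-learn’s API.

```python
import numpy as np

# Assumed band order for a 13-band Sentinel-2 stack; verify against your own data.
BANDS = ["B01", "B02", "B03", "B04", "B05", "B06", "B07",
         "B08", "B8A", "B09", "B10", "B11", "B12"]


def normalized_difference(a, b):
    """Return (a - b) / (a + b), writing 0 wherever a + b == 0."""
    a = np.asarray(a, dtype=np.float32)
    b = np.asarray(b, dtype=np.float32)
    denom = a + b
    out = np.zeros_like(denom)
    np.divide(a - b, denom, out=out, where=denom != 0)
    return out


def index_stack(cube):
    """Reduce a (13, H, W) reflectance cube to a (3, H, W) NDVI/NDWI/NDBI stack."""
    band = {name: cube[i] for i, name in enumerate(BANDS)}
    ndvi = normalized_difference(band["B08"], band["B04"])  # vegetation: NIR vs red
    ndwi = normalized_difference(band["B03"], band["B08"])  # water: green vs NIR
    ndbi = normalized_difference(band["B11"], band["B08"])  # built-up: SWIR vs NIR
    return np.stack([ndvi, ndwi, ndbi])


def to_uint8(stack):
    """Rescale index values from [-1, 1] to [0, 255] for an RGB-style model input."""
    clipped = np.clip(stack, -1.0, 1.0)
    return np.round((clipped + 1.0) * 127.5).astype(np.uint8)


# Usage with random placeholder reflectances (not real Sentinel-2 data).
cube = np.random.default_rng(0).uniform(0, 0.5, size=(13, 64, 64)).astype(np.float32)
rgb_like = to_uint8(index_stack(cube))
print(rgb_like.shape, rgb_like.dtype)  # (3, 64, 64) uint8
```

Mapping each index into an 8-bit channel lets the three-index image stand in for an RGB tile, which is what makes an ImageNet-pretrained encoder usable without changing its input layer.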

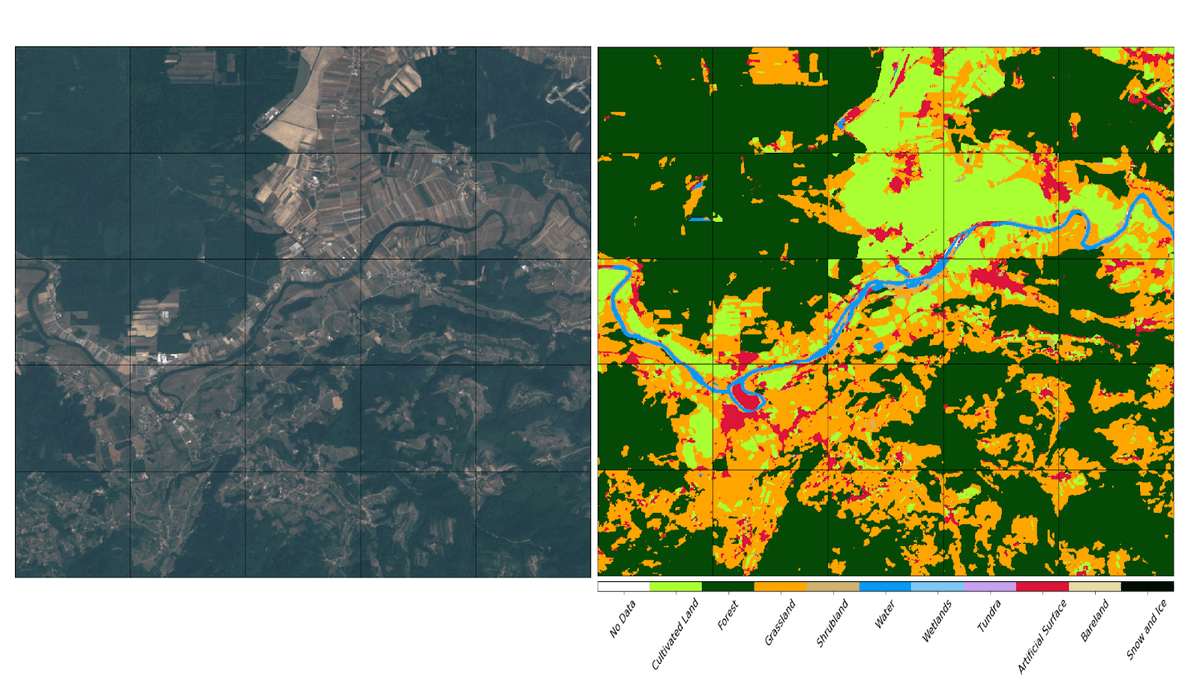

Figure 2. LULC prediction from a UNet trained with a ResNet50 encoder (right), and the corresponding satellite imagery in RGB (left).

Inference at scale

Once the model was trained and tested over a sample area, we could use it to predict land use over a much larger area. In this case, we wanted to run our model over all of Slovenia to compare it with the previous Sinergise model. Our standard approach for this type of problem is chip-n-scale, which requires the model to be packaged as a TensorFlow Serving image. Converting our fastai model to that format proved difficult, so we looked for another way to run chip-n-scale with fastai models. We released this work as fastai-serving: a server that runs fastai models behind the same API as TensorFlow Serving, without replicating TensorFlow Serving’s underlying functionality.
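
To make "the same API as TensorFlow Serving" concrete, the sketch below builds and parses bodies in TensorFlow Serving’s REST format: a POST to `/v1/models/<name>:predict` whose JSON body holds an `"instances"` list, with binary inputs wrapped as `{"b64": ...}`, and a response that carries a `"predictions"` list. It uses only the Python standard library and makes no network call. The exact input shape and chip encoding that chip-n-scale and fastai-serving exchange are assumptions here, as are the model name `lulc` and the placeholder chip bytes; check the fastai-serving README before relying on this shape.

```python
import base64
import json


def predict_url(host, model_name):
    """TF Serving-style REST predict endpoint for a named model."""
    return f"http://{host}/v1/models/{model_name}:predict"


def build_predict_request(chips):
    """Wrap encoded image chips (bytes) in a row-format "instances" body.

    Binary values are base64-encoded inside {"b64": ...} objects, following
    the TensorFlow Serving REST convention; the per-instance shape expected by
    fastai-serving is an assumption.
    """
    instances = [{"b64": base64.b64encode(chip).decode("ascii")} for chip in chips]
    return json.dumps({"instances": instances})


def parse_predict_response(body):
    """Return the per-instance predictions from a TF Serving-style response."""
    return json.loads(body)["predictions"]


# Usage: "lulc" and the chip bytes are hypothetical placeholders.
url = predict_url("localhost:8501", "lulc")
body = build_predict_request([b"chip-1", b"chip-2"])
print(url)  # http://localhost:8501/v1/models/lulc:predict
```

Because the client only depends on this request and response shape, a prediction pipeline written against TensorFlow Serving can, in principle, point at a compatible server without changes to its own code.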

Result

Using this framework, we ran inference over all of Slovenia in under 15 minutes. Because a single date of observation is inherently more variable, our model is more susceptible to noise and clouds, and it has a lower overall accuracy than the previous Sinergise model (85% vs. 94% of pixels correctly labeled). We are happy with that result: we believe the time and cost savings are a reasonable tradeoff in certain situations.
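
The accuracy figures above are pixel accuracy: the share of labeled pixels whose predicted class matches the reference map. A minimal NumPy sketch of that metric follows. The optional `ignore_value` (a hypothetical no-data code) is an assumption, and the toy arrays do not reproduce the 85% or 94% figures, which come from the original evaluation.

```python
import numpy as np


def pixel_accuracy(pred, truth, ignore_value=None):
    """Fraction of pixels whose predicted class equals the reference class.

    Pixels where truth == ignore_value (e.g. a no-data code) are excluded.
    """
    pred = np.asarray(pred)
    truth = np.asarray(truth)
    if pred.shape != truth.shape:
        raise ValueError("prediction and reference must have the same shape")
    if ignore_value is None:
        valid = np.ones(truth.shape, dtype=bool)
    else:
        valid = truth != ignore_value
    n = int(valid.sum())
    if n == 0:
        raise ValueError("no valid pixels to score")
    return float((pred[valid] == truth[valid]).sum()) / n


# Usage on a toy 2x2 map; class 0 stands in for no-data in the second call.
pred = np.array([[1, 2], [3, 3]])
truth = np.array([[1, 2], [3, 0]])
print(pixel_accuracy(pred, truth))                  # 0.75
print(pixel_accuracy(pred, truth, ignore_value=0))  # 1.0
```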

Reach out to Development Seed or to Sinergise if you are interested in using these tools or want to contribute to their development. Thanks again to Sinergise for their leadership in developing eo-learn, Sentinel Hub, and associated tools, and to ESA for its vision and financial support.
