A couple weeks ago, I talked at State of the Map US about our “skynet” experiments, using deep learning with satellite imagery and OpenStreetMap data to develop algorithms for automated mapping. Thanks to everyone who came to my talk — I’m really grateful for the positive feedback and excited by the great ideas that you shared!

The video and slides are available, but here’s the “tl;dr”:

Can deep learning get us to highly accurate and complete automated road detection?

Almost certainly.

Do you need to have hundreds of thousands of dollars and a dedicated research lab full of experts to do it?

Definitely not!

Here is the somewhat longer version.

Conversations at State of the Map and afterwards made it clear that there is a lot of interest and activity right now around using computer vision and machine learning for mapping:

-

The DeepOSM team is using a lean, fast neural net to estimate the likelihood of “road registration errors” in OSM.

-

Facebook gave us some good insight into their internal efforts at automated mapping.

-

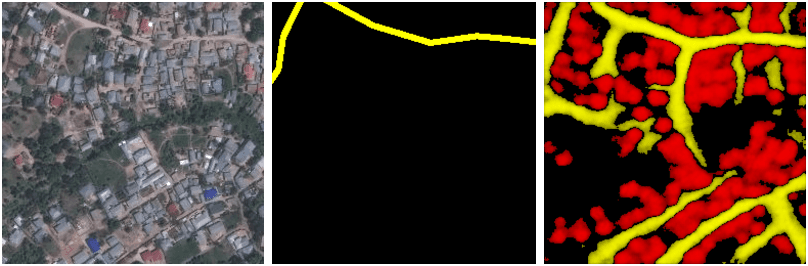

Dale Kunce suggested that Dar es Salaam has been sufficiently mapped by the Missing Maps effort to provide good training data. As soon as I got home I started working with this and have already been getting promising results in East Africa:

-

(left: input image, © Mapbox Satellite; middle: OpenStreetMap data; right: our model).

-

We had some great conversations with the World Bank on how to apply this to their own efforts.

-

There were thoughtful (and mildly controversial) reflections in blog posts from Mike Migurski and Tom Lee about the broader implications of “robot mapping.”

There’s much more to do here, but it finally feels like deep learning is becoming accessible to us as a community interested in real, practically useful results. Within the next few weeks, a few people working on machine learning for road mapping are going to jump on a hangout. If you’re interested in joining, please ping me on Twitter. I’m excited to see what we can build.

Meanwhile, we’ve been working to clean up the code and docs from our experiments, in the hope that others in the community can replicate our results and take them further. Check out the readmes for skynet-data and skynet-train. If we did it right, you should be able to start training your own neural net to extract features from satellite imagery with little more than an AWS account, an hour of setup, and 🐳 `docker run ...`. If not, drop us a line, or open an issue or PR!
